Generative AI Consulting: From ChatGPT Pilots to Production Deployment

Generative AI consulting has emerged as one of the fastest-growing professional services markets in the UK, driven by widespread organisational uncertainty about deployment rather than technological capability limitations. The UK AI sector generated £23.9 billion in revenue in 2024, a 68% year-on-year increase, with over 5,800 AI companies employing 86,139 people. The global AI consulting market reached $11.07 billion in 2025 and is projected to hit $90.99 billion by 2035 at a 26.2% compound annual growth rate. Yet despite this explosive market growth, evidence reveals a critical disconnect: 95% of generative AI pilots fail to produce measurable profit-and-loss impact, and consultant-led implementations succeed at double the rate of internal builds. This guide provides UK business leaders with a practical framework for understanding when to consult, whom to consult, and how to evaluate consulting engagements likely to deliver measurable return on investment.

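| Metric | Value | Context |
|---|---|---|
| Consultant-led success rate | 67% | Generative AI implementation outcomes |
| Internal build success rate | 33% | Without external consultation |
| Average cost of a failed implementation | £321K | Cost to UK SMEs |
| Pilot failure rate | 95% | Pilots lacking measurable P&L impact |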

Sources: Gartner AI Implementation Study 2026, McKinsey AI Consulting Report 2025, Bookipi SME AI Adoption 2026

Generative AI Consulting vs. Traditional AI Consulting

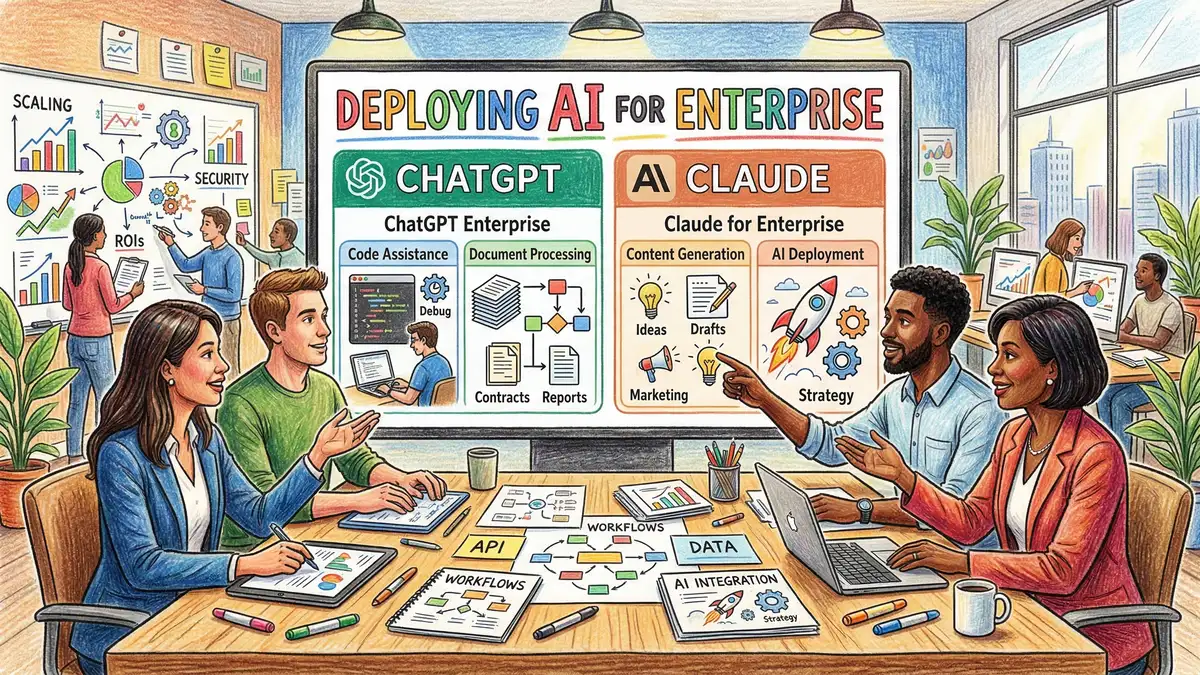

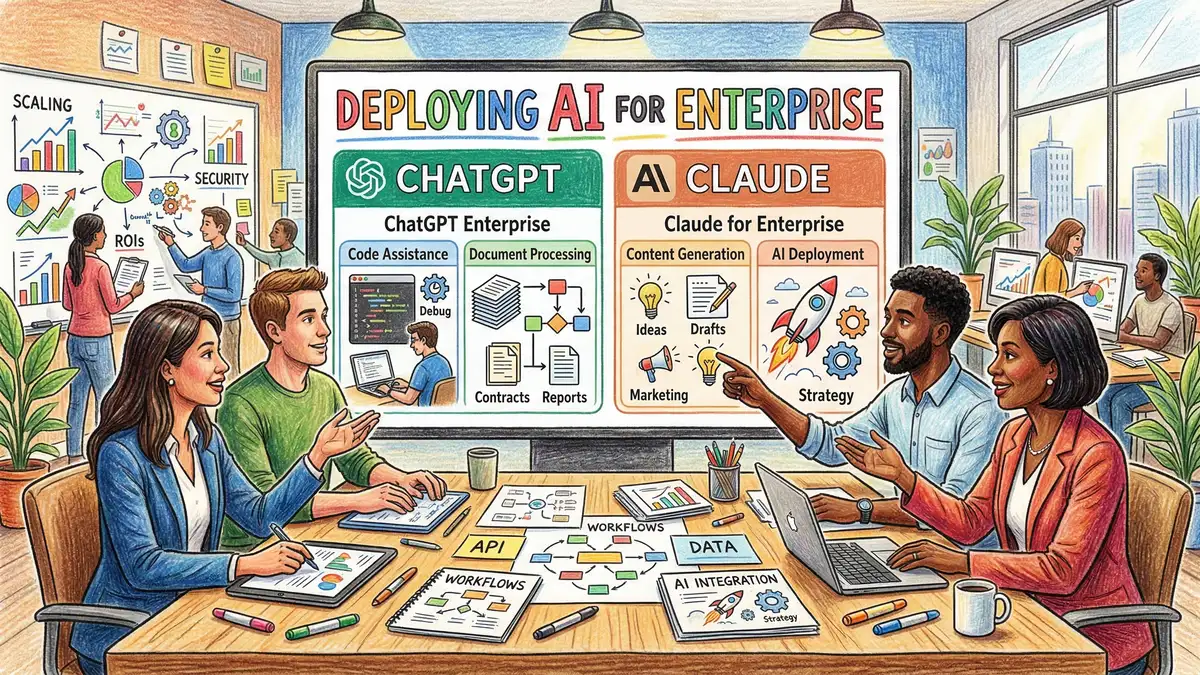

Generative AI consulting differs fundamentally from traditional AI consulting in scope, timeline, skill requirements, and deliverable expectations. Traditional AI consulting focuses on building custom machine learning infrastructure—data pipelines, predictive models, statistical algorithms—typically deployed over 6-12 months with significant data science team investment. Generative AI consulting focuses on rapid deployment of large language models and multimodal systems to solve immediate business problems, typically deployed over 2-8 weeks using pre-trained models and prompt engineering rather than model training.

Key Takeaway

Generative AI consulting differs fundamentally from traditional AI consulting by focusing on rapid deployment of pre-trained models to solve immediate problems. Consultant-led implementations achieve a 67% success rate compared with 33% for internal builds, primarily because consultants bring established deployment patterns and organisational change management expertise.

This distinction explains why consultant-led generative AI implementations succeed at double the rate of internal builds. Consultants bring established deployment patterns that work across organisations, pre-built integrations with common business systems, and proven change management approaches that account for how teams actually adopt new tools. Internal teams, building from scratch, typically underestimate integration complexity, struggle with security and compliance requirements, and implement changes without accounting for user adoption friction.

The Most Valuable Generative AI Use Cases

Generative AI consulting demand clusters around a well-defined set of use cases that have delivered value consistently, with manageable implementation complexity, across diverse organisations. Understanding which use cases deliver genuine ROI, and which remain speculative, is essential when evaluating consulting engagements.

Customer Service Automation

The most prevalent use case globally and within the UK is customer service automation through generative AI chatbots and intelligent routing systems. UK contact centres have deployed generative AI chatbots to handle routine inquiries without human intervention, provide 24/7 support availability, personalise customer interactions based on browsing history and lifecycle stage, and automatically categorise customer comments and feedback for escalation and quality assurance.

The business case for customer service chatbots is compelling: organisations typically achieve 20–40% reduction in average handle time for routine interactions, 70%+ self-service resolution rates for common inquiry categories, and 10–15% overall reduction in contact centre operating costs. Cox Automotive, the world's largest auto services provider, integrated Claude-powered AI agents across its dealer network and reported that consumer lead responses and test drive appointments more than doubled, with dealer website content creation time falling from weeks to same-day.

Content Generation and Marketing

Content generation represents the second most valuable use case—generative AI creating product descriptions, marketing copy, email campaigns, and social media content. This use case delivers consistent ROI primarily through volume and speed rather than perfection. Marketing teams can generate hundreds of product variations or email subject lines and A/B test them; generative AI systems can produce in minutes what previously required days of copywriter time. Organisations report 40–60% reduction in content creation timelines and 15–25% improvement in engagement metrics when AI-generated variations are A/B tested against traditionally created content.

Code Generation and Developer Productivity

Code generation through GitHub Copilot, Claude, and similar tools has demonstrated consistent productivity improvement in development teams. Research from Microsoft and independent studies shows 30–55% improvement in coding speed and 25–35% improvement in first-time correctness when developers use generative AI assistants. This translates directly to timeline acceleration for development projects and improved code quality. The implementation challenge is minimal—these are tools developers install locally—yet the organisational change challenge is significant because developers must learn new workflows and trust outputs from AI systems.

Why Generative AI Pilots Fail: The 95% Problem

The statistic that 95% of generative AI pilots fail to produce measurable profit-and-loss impact represents one of the most significant disconnects in current technology adoption. Most organisations can build a working chatbot or content generation system. The problem is not technical capability; it is that a successful pilot requires far more than a technically working system. It requires integration with business processes, user adoption, performance measurement, and organisational commitment, none of which pilots typically include.

The failure patterns cluster around five common causes. First is integration complexity that consumes budgets and timelines beyond initial estimates. A customer service chatbot requires integration with CRM systems, knowledge bases, ticketing systems, and payment processing, systems that often lack documented APIs and require custom middleware. Integration work estimated at 20% of the timeline can end up consuming 60% of the budget. Second is adoption friction, where teams revert to previous processes because the AI system requires workflow changes they were not prepared for. A sales team trained on traditional CRM systems may resist a new AI-powered prospecting tool because it changes how they qualify leads or manage pipelines.

Third is inadequate measurement: organisations do not define success metrics clearly before pilots begin, making it impossible to assess whether the pilot succeeded or failed. Fourth is security and compliance gaps that emerge after deployment and require remediation that stalls adoption. Fifth is performance issues: the AI system produces inconsistent outputs, and users lose confidence in its recommendations.

| Failure Mode | How Consultants Address It | Why It Matters |
|---|---|---|
| Integration Complexity | Pre-built connectors and integration patterns | Prevents timeline and budget overruns that derail projects |
| User Adoption Friction | Change management planning and user training | Teams that use the system produce measurable value; teams that revert to old processes do not |
| Measurement Gaps | Define success metrics before deployment begins | Enables rigorous assessment of whether value was delivered |
| Security and Compliance | Integrate compliance requirements from the start | Prevents systems that work but cannot be deployed in production |
| Performance Issues | Quality testing and output validation before handoff | User trust is a prerequisite for consistent adoption and value |

Source: Analysis of consulting engagement outcomes and pilot failure patterns, 2025-2026

Evaluating Generative AI Consulting Providers

Consulting engagement quality separates successful AI implementations from expensive failures. Our generative AI consulting service combines deployment expertise with organisational change management.

When evaluating generative AI consulting providers, several criteria distinguish firms likely to deliver value from those that will consume budget and time with limited measurable outcomes. First, examine whether the consultant has direct experience with your specific use case. A firm that has successfully deployed customer service chatbots for financial services organisations will solve your customer service challenge faster and more cost-effectively than a generalist firm learning your domain for the first time.

Second, assess whether the consultant brings pre-built integrations or integration patterns rather than building everything custom for your organisation. Does the firm have reference architectures? Does it maintain libraries of connectors? Can it point to similar projects delivered in 4–6 weeks, or does it estimate everything as custom work? Custom work creates timeline uncertainty and budget risk; reference architectures create predictability.

Third, examine their change management and user adoption approach. Do they plan for how teams will actually adopt the system? Do they conduct user training? Do they measure adoption metrics? Firms that focus purely on building working systems without addressing adoption rarely see value realised, because teams revert to previous processes.

Fourth, confirm their approach to measurement. Before the engagement begins, the consultant should help you define success metrics and measurement approaches. If the consultant does not discuss measurement during the sales process, they are unlikely to measure value delivery after deployment.

Domain Expertise and Use Case Experience

Choose consultants who have deployed similar solutions in your industry. Domain expertise accelerates both technical implementation and organisational adoption because the consultant understands your workflows and compliance requirements.

Pre-Built Integration Patterns

Look for consultants who bring reference architectures and pre-built connectors rather than treating every engagement as fully custom. This reduces timeline uncertainty and budget risk significantly.

Structured Change Management

Confirm the consultant has explicit processes for user training, adoption planning, and organisational change management. Technical success means nothing if teams do not actually use the system.

Measurement and Value Documentation

Work with consultants who establish measurement frameworks before deployment begins. Success metrics should be defined, baselines established, and outcomes tracked systematically.
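To make "define metrics and establish baselines before deployment" concrete, here is a minimal Python sketch of a success-metric record and a pilot check. The names `SuccessMetric` and `evaluate_pilot`, and every figure in the example, are hypothetical illustrations rather than any consultant's methodology or a real library; the handle-time target simply applies a 20% reduction, the low end of the range quoted earlier for customer service chatbots.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    """One success metric agreed before deployment (hypothetical structure)."""
    name: str
    baseline: float          # value measured before the pilot starts
    target: float            # value the pilot must reach to count as a success
    higher_is_better: bool   # False for metrics such as handle time or cost

    def met(self, observed: float) -> bool:
        """True if the observed value reaches the agreed target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target

    def improvement_pct(self, observed: float) -> float:
        """Percentage change from baseline, positive when it moved in the desired direction."""
        change = (observed - self.baseline) / self.baseline * 100
        return change if self.higher_is_better else -change


def evaluate_pilot(metrics: list[SuccessMetric], observed: dict[str, float]) -> dict[str, bool]:
    """Check every agreed metric; a metric that was never measured counts as not met."""
    return {m.name: m.name in observed and m.met(observed[m.name]) for m in metrics}


# Illustrative customer service pilot: baselines recorded before deployment.
metrics = [
    SuccessMetric("avg_handle_time_min", baseline=8.0, target=6.4, higher_is_better=False),
    SuccessMetric("self_service_rate_pct", baseline=30.0, target=50.0, higher_is_better=True),
]
results = evaluate_pilot(metrics, {"avg_handle_time_min": 6.0, "self_service_rate_pct": 45.0})
print(results)  # {'avg_handle_time_min': True, 'self_service_rate_pct': False}
```

Treating an unmeasured metric as "not met" is a deliberate choice in this sketch: it stops a pilot from being declared a success simply because nobody collected the data.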

The Typical Generative AI Consulting Engagement Timeline

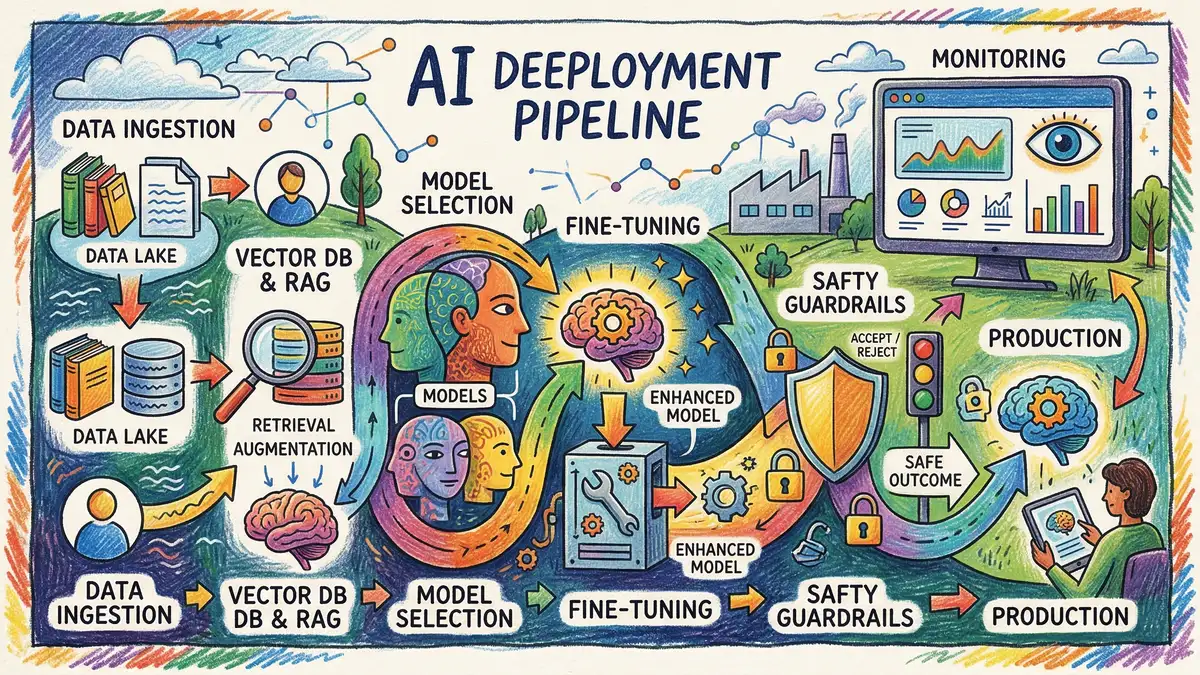

A typical consulting engagement for customer service automation or content generation extends 8–12 weeks from engagement initiation to production handoff. The first 1–2 weeks focus on discovery—understanding your current systems, defining success metrics, identifying integration requirements, and scoping work precisely. This phase is critical because scope clarity prevents timeline and budget creep later.

Weeks 2–6 focus on technical implementation—building integrations, configuring the generative AI system for your domain, testing outputs, and validating performance. This phase is where the consultant's domain expertise accelerates work significantly. Weeks 6–8 focus on testing and refinement—validating that outputs meet quality standards, that integrations function reliably, that security and compliance requirements are met, and that performance testing confirms the system can handle production load.
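Part of the testing and refinement phase can be automated with a regression suite of representative prompts that is re-run before handoff. The sketch below assumes a hypothetical `generate` function wrapping whatever model the engagement deploys; the `EvalCase` structure, the rules and the 95% pass-rate gate are illustrative assumptions, not an industry standard.

```python
from dataclasses import dataclass
from typing import Callable

Rule = Callable[[str], bool]  # returns True if an output is acceptable


@dataclass
class EvalCase:
    prompt: str
    must_mention: list[str]  # terms a correct answer is expected to contain


def run_validation(generate: Callable[[str], str], cases: list[EvalCase],
                   rules: list[Rule], min_pass_rate: float = 0.95) -> tuple[float, bool]:
    """Run every test prompt through the system; return (pass_rate, ready_for_handoff)."""
    passed = 0
    for case in cases:
        output = generate(case.prompt)
        mentions_ok = all(term.lower() in output.lower() for term in case.must_mention)
        if mentions_ok and all(rule(output) for rule in rules):
            passed += 1
    pass_rate = passed / len(cases) if cases else 0.0
    return pass_rate, pass_rate >= min_pass_rate


# Example rules (assumptions): non-empty, bounded length, no promises the business cannot make.
rules: list[Rule] = [
    lambda out: 0 < len(out) <= 800,
    lambda out: "guarantee" not in out.lower(),
]


def stub_generate(prompt: str) -> str:
    # Stand-in for the deployed system; a real harness would call the model here.
    canned = {
        "How long do refunds take?": "Refunds are processed within 5 working days.",
        "What are your opening hours?": "",
    }
    return canned[prompt]


cases = [
    EvalCase("How long do refunds take?", ["refund"]),
    EvalCase("What are your opening hours?", ["open"]),
]
print(run_validation(stub_generate, cases, rules))  # (0.5, False)
```

Because the suite is just data, the same cases can be re-run after every prompt or integration change, which is one way to catch the inconsistent outputs that erode user trust.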

Weeks 8–10 focus on user training, change management planning, and documentation. This phase is where many internal projects fail, because they skip or under-resource this work. Successful consultant-led engagements allocate substantial effort to training teams on the new system, creating documentation that teams will actually reference, and managing resistance to change. The final weeks focus on production deployment, monitoring for issues, and knowledge transfer to your internal teams for ongoing operation and optimisation.

Scaling From Pilot to Enterprise Deployment

Many organisations approach generative AI as a series of disconnected pilots—one team experimenting with customer service automation, another with content generation, another with code generation. This approach creates technical debt, inconsistent governance, and missed opportunities for efficiency across the organisation. Scaling from successful pilots to enterprise deployment requires a different approach.

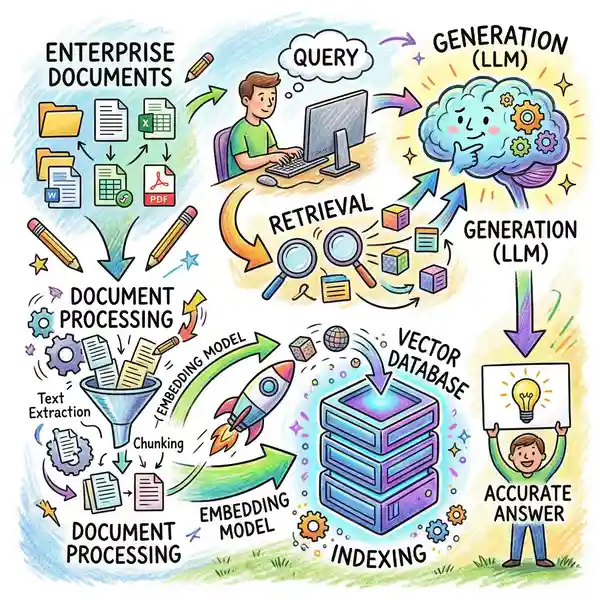

First, establish consistent governance across pilot teams. This means shared policies for data usage, output quality standards, model selection, and change management processes. When each team implements governance separately, organisations end up with incompatible standards that prevent sharing of infrastructure, templates, and learnings. Second, build a shared platform layer rather than standalone systems. Rather than each team implementing their own customer service chatbot or content generation system, build a platform that multiple teams can use, reducing implementation time for subsequent deployments from 8-12 weeks to 2-4 weeks.
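A shared platform layer can start as a single entry point that every team calls, so governance (approved models, redaction, output limits, usage reporting) is implemented once rather than per team. This is a minimal sketch under stated assumptions: `SharedPlatform`, `GovernancePolicy`, the model names and the card-number redaction pattern are all hypothetical, and a model backend is represented as any Python function from prompt to text.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

ModelBackend = Callable[[str], str]  # hypothetical: any function that sends a prompt to a model


@dataclass
class GovernancePolicy:
    """Organisation-wide rules applied to every team's requests (illustrative only)."""
    approved_models: set[str]
    max_output_chars: int = 2000
    # Example pattern only: 16 consecutive digits, roughly a payment card number.
    redact_patterns: list[str] = field(default_factory=lambda: [r"\b\d{16}\b"])


class SharedPlatform:
    """One entry point for all teams, so governance is implemented once."""

    def __init__(self, policy: GovernancePolicy, backends: dict[str, ModelBackend]):
        self.policy = policy
        self.backends = backends
        self.usage: dict[str, int] = {}  # requests per team, for shared reporting

    def _redact(self, text: str) -> str:
        for pattern in self.policy.redact_patterns:
            text = re.sub(pattern, "[REDACTED]", text)
        return text

    def generate(self, team: str, model: str, prompt: str) -> str:
        if model not in self.policy.approved_models:
            raise PermissionError(f"model {model!r} is not approved by the governance policy")
        output = self.backends[model](self._redact(prompt))  # redact before data leaves
        self.usage[team] = self.usage.get(team, 0) + 1
        return self._redact(output)[: self.policy.max_output_chars]


def echo_backend(prompt: str) -> str:
    # Stand-in for a real model call.
    return "You said: " + prompt


policy = GovernancePolicy(approved_models={"model-a"})
platform = SharedPlatform(policy, {"model-a": echo_backend, "model-b": echo_backend})
print(platform.generate("support", "model-a", "My card is 1234567812345678"))
# You said: My card is [REDACTED]
```

A second team onboarding to this layer reuses the policy, redaction and reporting as they are and only adds its own prompts, which is the mechanism behind shorter subsequent deployments.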

Best Practice Approach

Organisations that succeed with generative AI at scale invest in governance and platform architecture early, even during pilots. This enables subsequent deployments to proceed faster, reduces total cost of ownership, and creates consistent user experience across teams.

Budget and ROI Expectations

Generative AI consulting engagements vary significantly in cost depending on complexity, scope, and integration requirements. A relatively simple customer service chatbot implementation with basic integrations typically costs £40K–£80K in consulting fees and takes 8–10 weeks. More complex implementations with significant system integrations, custom logic, and large-scale change management might cost £120K–£200K. The cost premium reflects not additional complexity in building the AI system itself, but the complexity of integrating it with your ecosystem and ensuring teams actually adopt it.

ROI should be estimated conservatively in the first year. A customer service chatbot that reduces contact centre operating costs by 10–15% might save £50K–£150K annually, depending on contact centre size. A content generation implementation might save £30K–£60K annually in copywriting time. Code generation tooling might accelerate development timelines by 3–5 weeks annually, translating to £40K–£100K in developer time savings. These are not transformative numbers, but they represent real, measurable value that typically exceeds the consulting cost within the first year if the implementation is executed properly.
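The payback arithmetic behind these ranges is easy to check. The sketch below assumes savings accrue evenly once the system is adopted and, as a labelled simplification, that nothing is saved during an adoption ramp, set here to 3 months (the low end of the 3–6 months to measurable value quoted in this article's FAQ). The function name and scenarios are illustrative.

```python
def payback_months(consulting_fee: float, annual_savings: float, ramp_months: float = 0.0) -> float:
    """Months from production deployment until cumulative savings cover the consulting fee.

    Assumes (for illustration) no savings during the first `ramp_months`,
    then savings accruing evenly at `annual_savings` per year.
    """
    if annual_savings <= 0:
        return float("inf")  # the fee is never recovered
    return ramp_months + consulting_fee * 12 / annual_savings


# Scenarios built from the ranges above: fees £40K–£80K, annual savings £50K–£150K.
scenarios = {
    "best case": (40_000, 150_000),
    "mid case": (60_000, 100_000),
    "worst case": (80_000, 50_000),
}
for label, (fee, savings) in scenarios.items():
    months = payback_months(fee, savings, ramp_months=3)
    print(f"{label}: payback in {months:.1f} months")
# best case: payback in 6.2 months
# mid case: payback in 10.2 months
# worst case: payback in 22.2 months
```

Under these assumptions the mid case pays back inside a year, while pairing the highest fee with the lowest savings takes nearly two years, so first-year payback depends on matching engagement size to the savings a use case can realistically deliver.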

Where organisations fail is in comparing actual, measured value to speculative value. Many organisations justify consulting investment based on optimistic scenarios—"our customer service chatbot will handle 80% of inquiries, saving £500K annually"—and then feel disappointed when results are 40% adoption producing £100K savings. These results represent genuine value, but disappointment occurs because expectations were misaligned with realistic delivery.

Frequently Asked Questions About Generative AI Consulting

Should we build AI capabilities internally or engage consultants?

The evidence strongly favours consultant engagement for your first deployment. Consultant-led implementations succeed at a 67% rate compared with 33% for internal builds, primarily because consultants bring established patterns and change management expertise. After your first deployment, you will have internal capability for subsequent iterations. The optimal approach is to use consultants for initial implementations, then build internal capability from there.

How do we avoid the common consulting engagement failure patterns?

Define success metrics before engagement begins—what does success look like in measurable terms? Ensure the consultant addresses integration complexity explicitly—do not accept "we will handle integration" without detailed planning. Budget generously for change management and user training. Insist on knowledge transfer so your teams understand how to operate the system after consultant handoff. Establish measurement processes so you can document value delivered.

What questions should we ask when evaluating consulting proposals?

Ask for references from similar implementations; do not accept generic references. Ask specifically about integration complexity and how they manage it. Ask about change management processes and user training. Ask what happens if the system does not achieve the success metrics you defined. Ask who will support the system after the consulting engagement ends and what support model they offer. Ask how they handle scope changes if requirements become clearer during implementation.

How long before we see ROI from generative AI implementation?

If the implementation is executed well, you should see measurable value within 3–6 months of production deployment as teams adopt the system and develop confidence in it. Payback of consulting fees typically occurs within 12 months if the use case has clear operational benefits like cost reduction or time savings. Longer payback timelines suggest either the use case was not appropriate for near-term ROI, or the implementation is underperforming and needs remediation.

Should we try a low-cost pilot before engaging consulting for full implementation?

Low-cost pilots using DIY approaches can validate that the use case is appropriate for your organisation. However, low-cost pilots frequently create unrealistic expectations because they skip integration, change management, and measurement work that full implementations require. A better approach is a scoped consulting engagement (6–8 weeks) focused specifically on your highest-priority use case, which validates the use case rigorously whilst delivering production value.

What's the relationship between generative AI and other AI initiatives like predictive analytics?

Generative AI and predictive analytics serve different purposes. Predictive analytics answers "what will happen?" by predicting customer churn, forecasting demand, or identifying fraud. Generative AI answers "what should we generate?": content, code, customer responses. Many organisations benefit from both. However, generative AI projects have faster timelines and more immediate ROI potential, typically making them a better starting point if you are new to AI consulting.

Ready to move beyond pilot paralysis?

Consultant-led generative AI implementations succeed at double the rate of internal builds. Get clarity on your highest-value use cases, access proven deployment patterns, and deliver measurable business value within 8–12 weeks.

P3 AI Consulting Team

Generative AI Implementation Specialists, Whitehat SEO

The P3 AI Consulting team brings proven generative AI implementation patterns, pre-built integration libraries, and structured change management approaches that deliver measurable business value. We specialise in moving organisations from pilot experiments to production deployments that generate genuine ROI.

This article is part of the AI Consulting cluster. Read related content on AI readiness assessment, enterprise AI strategy, and AI capability building for your organisation.