UK AI Policy for B2B Marketers

The UK has no standalone AI legislation in 2026, but B2B marketers still face binding compliance obligations from three directions: escalating ICO enforcement of existing data protection law, the EU AI Act's extraterritorial reach, which affects any UK business with European clients, and the Data (Use and Access) Act 2025, whose data protection provisions came into force on 5 February 2026. Only 7% of UK businesses have embedded AI governance frameworks, yet 72% of AI-adopting companies already use AI in marketing. This guide explains what UK marketing directors at mid-market B2B companies need to know, what to do, and which dates matter most.

Does the UK Have an AI Law in 2026?

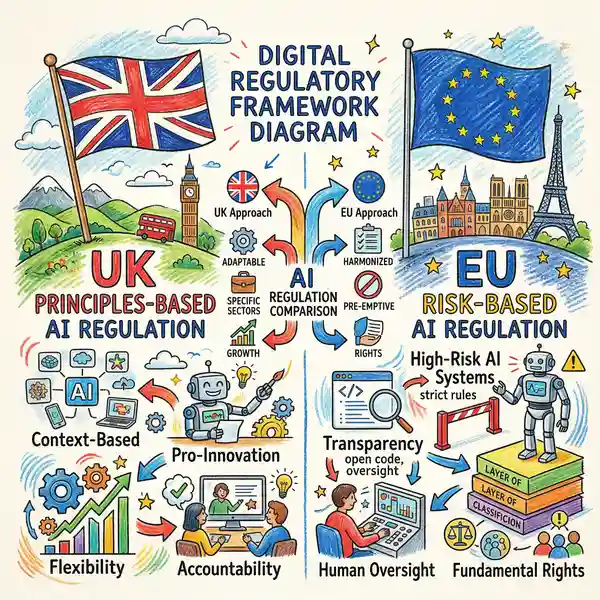

The UK government has deliberately chosen not to legislate on AI in a comprehensive way. Instead, it operates through a principles-based, sector-specific framework built around five core AI principles: safety and security, transparency and explainability, fairness, accountability and governance, and contestability and redress. Existing regulators, including the ICO, CMA and FCA, are tasked with interpreting and applying these principles within their own domains. This means there is no single AI rulebook to follow. Compliance requirements are spread across multiple regulators and multiple pieces of legislation, creating a fragmented landscape that demands careful navigation.

Key legislative milestones in 2026

The Data (Use and Access) Act 2025 (DUAA) received Royal Assent on 19 June 2025 and represents the most significant change to UK data law since Brexit. Its data protection provisions came into force on 5 February 2026. Critical changes for marketers include significantly relaxed automated decision-making rules under Article 22 UK GDPR, PECR fines raised to £17.5 million or 4% of global turnover (up from £500,000), and direct marketing explicitly listed as a legitimate interest.
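To make the new ceiling concrete, the sketch below works out the theoretical maximum fine for a given turnover. It assumes the cap is the higher of the fixed amount and the turnover percentage, which is how the UK GDPR higher-tier maximum is defined. The function name and the turnover figures are illustrative only, and the result is a statutory ceiling, not a prediction of any actual penalty.

```python
from decimal import Decimal

# Ceiling cited in this guide: GBP 17.5 million or 4% of global annual turnover.
# Assumption: the higher of the two applies, mirroring the UK GDPR higher tier.
FIXED_CAP_GBP = Decimal("17500000")
TURNOVER_RATE = Decimal("0.04")


def max_fine_gbp(global_turnover_gbp: Decimal) -> Decimal:
    """Return the theoretical maximum fine for a given global annual turnover."""
    return max(FIXED_CAP_GBP, global_turnover_gbp * TURNOVER_RATE)


if __name__ == "__main__":
    # Illustrative turnover figures, not real companies.
    for turnover in (Decimal("50000000"), Decimal("1000000000")):
        ceiling = max_fine_gbp(turnover)
        print(f"Turnover £{turnover:,.0f} -> ceiling £{ceiling:,.0f}")
```

For a company with £50 million turnover the fixed £17.5 million cap dominates. At £1 billion turnover the 4% figure (£40 million) takes over.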

A standalone UK AI Bill was promised in the July 2024 King's Speech but has not materialised. The government has indicated that it may appear in the spring 2026 King's Speech (scheduled for 13 May 2026), but this remains uncertain. If introduced, the bill is expected to be narrow, covering advanced AI models and AI-copyright provisions only.

Which UK Regulators Enforce AI Rules for Marketers?

Without dedicated AI legislation, three regulators are most relevant to B2B marketing teams. The Digital Regulation Cooperation Forum (DRCF), comprising the ICO, CMA, Ofcom and FCA, coordinates cross-regulator AI activity and provides multi-regulator guidance to innovators. Understanding each regulator's priorities and enforcement record is essential for compliance.

Information Commissioner's Office (ICO)

The de facto AI regulator for marketing. It published its AI and Biometrics Strategy in June 2025. Enforcement is escalating: £19.6 million in fines from seven cases in 2025. Its focus areas are transparency, bias and automated decision-making.

Competition and Markets Authority (CMA)

Monitors AI competition risks and vendor relationships. Since April 2025 it has held direct consumer-protection enforcement powers, with fines of up to 10% of global turnover. It also oversees consolidation through AI partnerships.

Advertising Standards Authority (ASA)

No AI-specific rules. Existing advertising codes apply to AI-generated content. No blanket disclosure requirement, but marketers remain responsible for non-compliant AI outputs.

Recent ICO enforcement actions

The ICO's enforcement activity in 2026 reflects heightened scrutiny of AI deployments. On 24 February 2026, the ICO issued its largest children's privacy fine: £14.47 million against Reddit for collecting children's data without proper safeguards. On 5 February 2026, fines totalling £247,590 were issued to Imgur and MediaLab.AI for privacy violations. The ICO also won a significant jurisdiction appeal against Clearview AI, establishing UK enforcement reach over non-UK AI providers. These actions signal that the regulator will pursue AI-driven profiling and data misuse aggressively throughout 2026.

How Does the EU AI Act Affect UK Businesses?

The EU AI Act (Regulation 2024/1689) has explicit extraterritorial scope, meaning UK businesses cannot ignore it even after Brexit. It applies to any UK company placing AI systems on the EU market, any UK business whose AI output is used in the EU, and any UK organisation whose AI systems affect people within the EU. A UK marketing firm using AI chatbots accessible to EU users must comply. A UK content-generation tool used by EU-based clients triggers obligations. The August 2026 transparency deadline is critical for any company with European reach.
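The three scope triggers described above can be turned into a simple first-pass triage for each AI use case in your marketing stack. The sketch below is only that: the class, field names and example use cases are hypothetical, and a positive result means "send to legal review", not "the Act definitely applies".

```python
from dataclasses import dataclass


@dataclass
class AiUseCase:
    """Hypothetical description of one AI-powered marketing activity."""
    name: str
    placed_on_eu_market: bool   # the AI system is offered to customers in the EU
    output_used_in_eu: bool     # generated output is used by people in the EU
    affects_people_in_eu: bool  # e.g. a chatbot that EU visitors can reach


def eu_ai_act_may_apply(use_case: AiUseCase) -> bool:
    """Flag a use case for legal review if any of the three triggers is met."""
    return (
        use_case.placed_on_eu_market
        or use_case.output_used_in_eu
        or use_case.affects_people_in_eu
    )


if __name__ == "__main__":
    use_cases = [
        AiUseCase("Website chatbot", False, False, True),
        AiUseCase("UK-only internal forecasting", False, False, False),
    ]
    for case in use_cases:
        status = "review needed" if eu_ai_act_may_apply(case) else "out of scope on these facts"
        print(f"{case.name}: {status}")
```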

What is already in force

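Since February 2025, prohibitions on "unacceptable risk" AI practices have applied, including social scoring and manipulative AI. AI literacy obligations are already active: all organisations deploying AI must ensure staff have sufficient AI literacy. Since August 2025, rules for general-purpose AI models have also applied. The Act's top penalty tier, up to €35 million or 7% of global annual turnover, is reserved for prohibited practices. Most other breaches, including those by providers of general-purpose AI models, carry fines of up to €15 million or 3%.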

UK vs EU comparison

| Requirement | UK Position | EU AI Act |
|---|---|---|
| AI-specific legislation | No (principles-based) | Yes (risk-based) |
| Automated decisions | Permitted with safeguards | Prohibited by default (under EU GDPR) |
| AI content labelling | Not required | Required from Aug 2026 |
| Chatbot disclosure | Not required | Required from Aug 2026 |
| Maximum fines | £17.5M / 4% turnover | €35M / 7% turnover |

Source: EU AI Act (Regulation 2024/1689), UK Data (Use and Access) Act 2025

UK-EU data adequacy was renewed on 19 December 2025 for six years, meaning personal data continues to flow freely from the EU to the UK. However, data adequacy does not exempt UK businesses from EU AI Act obligations. These are separate compliance requirements that sit alongside data protection.

How Do AI Regulations Affect Your Marketing Technology Stack?

Every AI-powered tool in your marketing stack carries compliance implications. From CRM lead scoring to email personalisation to content generation, each tool processes personal data and many constitute profiling under UK GDPR. Understanding where your obligations lie is essential before investing further in marketing AI. The DUAA's February 2026 changes significantly relaxed automated decision-making rules, but only for non-special-category data processing within the UK.

CRM, automation and personalisation

AI-powered lead scoring, predictive analytics and customer segmentation in platforms like HubSpot and Salesforce involve processing personal data and often constitute profiling under UK GDPR. The DUAA's relaxation of automated decision-making rules is significant: CRM-based lead scoring that does not use special-category data (health, racial origin, political opinions) is now permitted with safeguards rather than prohibited by default. However, companies also serving EU customers must comply with the stricter EU GDPR position, where ADM remains prohibited unless narrow exceptions apply, creating a dual-compliance requirement.
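The dual-compliance position can be summarised as a small decision helper. This is a simplified sketch of the rules as described in this guide, not legal advice: the function name and the wording of the returned notes are assumptions made for illustration.

```python
def lead_scoring_regimes(uses_special_category_data: bool,
                         serves_eu_customers: bool) -> list[str]:
    """List the automated decision-making positions a lead-scoring model faces."""
    regimes = []
    if uses_special_category_data:
        # Health, racial origin, political opinions and similar data.
        regimes.append("UK GDPR: stricter controls (special-category data)")
    else:
        regimes.append("UK GDPR: permitted with safeguards")
    if serves_eu_customers:
        # EU GDPR keeps the stricter default for automated decisions.
        regimes.append("EU GDPR: prohibited unless a narrow exception applies")
    return regimes


if __name__ == "__main__":
    for note in lead_scoring_regimes(uses_special_category_data=False,
                                     serves_eu_customers=True):
        print(note)
```

A UK-only model that avoids special-category data returns a single entry. Adding EU customers returns two, which is the dual-compliance case.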

The DUAA's increase of PECR fines to £17.5 million or 4% of global turnover makes email compliance a board-level risk. AI-powered send-time optimisation, subject-line testing and personalised content are profiling activities requiring a documented lawful basis and transparency. Direct marketing is now explicitly listed as a legitimate interest under the DUAA, though a Legitimate Interests Assessment remains required.

AI content generation tools

For content generation tools such as ChatGPT, Claude and Jasper, the primary data protection risk arises when personal data is inputted. There is currently no UK requirement to label AI-generated marketing content, though the EU AI Act Article 50 will require labelling from August 2026 for EU-facing content. Best practice recommends that all B2B companies prohibit uploading personal or confidential data to external AI tools without specific authorisation, and mandate human review of all AI-generated content before publication.
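As an illustration of the "no personal data in external AI tools" rule, the sketch below screens a prompt for obvious email addresses and UK-style phone numbers before it is sent anywhere. It is a deliberately crude check based on two hypothetical regular expressions. It will miss names, addresses and most other personal data, so it supports human judgement and staff training rather than replacing them.

```python
import re

# Loose patterns for illustration only; both are assumptions, not a standard.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Matches UK-style numbers such as 020 7946 0000 or +44 7700 900123.
PHONE_PATTERN = re.compile(r"(?:\+44\s?|0)\d(?:[\s-]?\d){8,9}")


def find_possible_personal_data(prompt: str) -> list[str]:
    """Return substrings that look like email addresses or UK phone numbers."""
    hits = EMAIL_PATTERN.findall(prompt)
    hits += PHONE_PATTERN.findall(prompt)
    return hits


if __name__ == "__main__":
    draft = "Write a follow-up to jane.doe@example.com, mobile +44 7700 900123."
    found = find_possible_personal_data(draft)
    if found:
        print("Blocked: remove possible personal data first:", found)
    else:
        print("No obvious personal data found.")
```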


How to Build an Internal AI Policy for Your Marketing Team

The ICO published its own internal AI use policy in August 2025, providing an authoritative model for UK businesses. It requires staff to use only approved AI tools on approved devices, mark AI-generated outputs clearly, submit all externally published AI outputs to human review, and keep personal or confidential data out of AI tools unless authorised. For mid-market B2B companies, a practical internal AI policy should cover these 13 core components:

Purpose, scope and definitions

Clear policy scope covering who it applies to and what counts as AI for your organisation.

Acceptable and prohibited uses

Approved tools, permitted purposes and explicit red lines for your marketing team.

Data handling rules

Prohibiting personal or confidential data input into unapproved external AI tools.

Human oversight requirements

Mandating review of all externally published AI outputs before launch.

Quality assurance

Accuracy checking, bias monitoring and intellectual property verification.

Transparency obligations

Internal and external labelling requirements for AI-generated content.

Procurement governance

DPIA requirements before deploying new AI tools in your marketing stack.

Training requirements

Mandatory AI literacy for all team members (already an EU AI Act obligation).

Governance structure

Designate a responsible person and maintain an AI tool inventory for audit purposes.

Incident reporting

Procedures for flagging AI errors, biases or data breaches for investigation.

Regulatory compliance mapping

Alignment with UK GDPR, DUAA and EU AI Act (if applicable to your business).

Disciplinary measures

Consequences for policy violations and non-compliance with approved tools and processes.

Quarterly review schedule

Regular updates to the policy as regulatory landscape and technology evolve.

Key Takeaway

An internal AI policy must balance innovation with risk management. Use the ICO's August 2025 template as your foundation, customise for your marketing operations, and commit to quarterly reviews as the regulatory landscape evolves.
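The AI tool inventory called for under the governance component can start as a very small data structure. The sketch below is one possible shape, not a prescribed format: the record fields, the example tools and the 90-day interval (standing in for "quarterly") are all assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AiToolRecord:
    """One row in a hypothetical AI tool inventory."""
    name: str
    owner: str
    approved: bool
    dpia_completed: bool
    last_reviewed: date


def tools_needing_attention(inventory: list[AiToolRecord],
                            today: date,
                            review_interval_days: int = 90) -> list[str]:
    """Return names of tools that are unapproved, lack a DPIA, or are overdue for review."""
    flagged = []
    for tool in inventory:
        overdue = (today - tool.last_reviewed).days > review_interval_days
        if not tool.approved or not tool.dpia_completed or overdue:
            flagged.append(tool.name)
    return flagged


if __name__ == "__main__":
    inventory = [
        AiToolRecord("Lead scoring model", "Marketing Ops", True, True, date(2026, 3, 1)),
        AiToolRecord("Copy assistant", "Content", True, False, date(2026, 3, 15)),
        AiToolRecord("Chat widget", "Web", True, True, date(2025, 11, 1)),
    ]
    print(tools_needing_attention(inventory, today=date(2026, 4, 1)))
```

Keeping the inventory in a structured form like this makes it straightforward to produce an audit list at each quarterly review.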

What Do UK AI Adoption and Governance Statistics Reveal?

The gap between AI adoption and AI governance in UK businesses is one of the defining risks of 2026. Marketing teams are adopting AI tools faster than compliance frameworks can keep up. Understanding the scale of this gap helps justify investment in governance infrastructure.

| Figure | Metric | Detail |
|---|---|---|
| 7% | AI governance embedded | Fully embedded frameworks in UK businesses |
| 72% | Marketing AI adoption | Among AI-adopting companies |
| £17.5M | Maximum PECR fines | Up from £500K under pre-DUAA regime |
| 89% | Public support | For an independent AI regulator with powers |

Sources: Trustmarque AI Governance Index 2025; DSIT AI Adoption Survey January 2026; Data (Use and Access) Act 2025; Ada Lovelace Institute Polling December 2025

The Trustmarque AI Governance Index 2025 found that 54% of UK businesses have minimal governance or none at all, while only 18% have implemented continuous monitoring with KPIs. Experian's Responsible AI report (November 2025) found 76% of business leaders admit putting responsible AI into practice "remains one of their biggest challenges." The gap is both a risk and an opportunity: companies building governance frameworks now gain a competitive advantage as regulators tighten enforcement later in 2026.

What Are the Critical Dates for AI Compliance in 2026?

The regulatory calendar for 2026 contains several milestones that will directly affect how B2B marketing teams operate. Three dates in particular require attention from every marketing director with responsibility for compliance.

18 March 2026: AI Copyright consultation response (completed)

The government published its response to the AI and copyright consultation on 18 March 2026. It reversed its preferred approach, abandoning the proposed text and data mining (TDM) exception combined with an opt-out mechanism. Instead, it adopted a "wait-and-see" position, maintaining the status quo with no immediate copyright reform. Overwhelming opposition from the creative industries to the original proposal prevailed. For marketing teams, this means the copyright rules for AI-generated content remain unchanged for now, though pressure for reform will likely continue beyond 2026.

13 May 2026: King's Speech (confirmed)

The State Opening of Parliament is confirmed for 13 May 2026. If a UK AI Bill appears in the King's Speech, it will signal the direction of UK AI legislation for the remainder of the parliament. The bill is expected to be narrow (frontier models and copyright only), but even a narrow bill would reshape AI tool procurement requirements. A bill introduced in May would not become law before late 2026 or early 2027 at the earliest.

2 August 2026: EU AI Act Article 50 deadline (critical)

Article 50 transparency obligations take effect, requiring chatbots and digital assistants to inform EU users they are interacting with AI. AI-generated text, audio, image and video must carry machine-readable marking. A draft Code of Practice on Transparency (December 2025) proposes multilayered marking including watermarking, metadata and a "Common Icon" visual label. The final code is expected by June 2026. Any UK B2B company with European clients or website visitors must be prepared by this date.
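Until the final code is published, teams can at least start recording which assets are AI-generated in a consistent, machine-readable way. The sketch below builds a simple JSON label for one asset. Every field name here is a placeholder assumption: it does not reproduce the draft Code of Practice or any official schema, and it would need to be mapped to whatever marking format the final code specifies.

```python
import json
from datetime import datetime, timezone


def build_ai_content_label(asset_id: str, generator: str, human_reviewed: bool) -> str:
    """Return a JSON string describing an AI-generated asset (placeholder schema)."""
    label = {
        "asset_id": asset_id,
        "ai_generated": True,
        "generator": generator,            # tool that produced the content
        "human_reviewed": human_reviewed,  # whether an editor signed it off
        "labelled_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(label, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical asset identifier and generator name.
    print(build_ai_content_label("blog-2026-041", "example-llm", human_reviewed=True))
```

Storing a record like this alongside each asset means the labelling decision is already documented when the final marking requirements are confirmed.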

August 2026 Deadline Alert

Common mistake: Assuming that UK-only marketing exempts you from EU AI Act obligations. If your website is accessible to EU users, if any customer is based in the EU, or if you use AI in any content likely to reach EU audiences, Article 50 applies to you.

The reality: Failure to implement transparency marking by August 2026 can result in fines of up to €15 million or 3% of global turnover, the tier the EU AI Act applies to breaches of its transparency obligations. The absence of a labelling strategy for AI-generated marketing content is now a board-level risk for B2B companies with European reach.

2026 AI compliance timeline at a glance

| Date | Milestone | Impact on B2B Marketing | Action Required |
|---|---|---|---|
| 5 Feb 2026 | DUAA data protection provisions in force | PECR fines raised to £17.5M; ADM rules relaxed | Update lawful basis documentation; review lead scoring |
| 18 Mar 2026 | AI copyright consultation response | Status quo maintained; no TDM exception | No immediate change; monitor future reform signals |
| 13 May 2026 | King's Speech (possible AI Bill) | May reshape AI tool procurement requirements | Monitor for bill introduction; review vendor contracts |
| Jun 2026 | EU AI Act transparency code finalised | Details of labelling requirements confirmed | Begin implementing marking systems for EU content |
| 2 Aug 2026 | EU AI Act Article 50 transparency deadline | Chatbot disclosure + AI content labelling mandatory | Full compliance required for EU-facing marketing |
| H2 2026 | ICO statutory code on AI and ADM | New enforceable standards for AI decision-making | Complete DPIAs for lead scoring and personalisation |

Sources: UK Parliament, EU AI Act timeline, ICO 2025/26 action plan
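For planning purposes, the fixed dates in the timeline can be turned into a simple countdown. The sketch below uses only the two exact upcoming dates given in this guide. The dictionary and function names are illustrative, and the dates should be re-checked against official sources before they drive a project plan.

```python
from datetime import date

# Exact dates taken from the timeline in this guide.
MILESTONES = {
    "King's Speech (possible AI Bill)": date(2026, 5, 13),
    "EU AI Act Article 50 transparency deadline": date(2026, 8, 2),
}


def days_remaining(today: date) -> dict[str, int]:
    """Return days from `today` to each milestone (negative if already passed)."""
    return {name: (deadline - today).days for name, deadline in MILESTONES.items()}


if __name__ == "__main__":
    for name, days in days_remaining(date.today()).items():
        print(f"{name}: {days} days")
```

Measured from 1 April 2026, this gives 42 days to the King's Speech and 123 days to the Article 50 deadline.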

Frequently Asked Questions

Does the UK have an AI law in 2026?

No. The UK has no standalone AI legislation in force as of April 2026. AI is regulated through existing laws including UK GDPR, the Data Protection Act 2018 and the Data (Use and Access) Act 2025, applied by sector regulators including the ICO, CMA and ASA. A UK AI Bill is expected but has not been introduced.

Do UK businesses need to comply with the EU AI Act?

Yes, if their AI systems are used in the EU, affect EU residents, or are placed on the EU market. This includes UK companies with EU-based clients, websites accessible to EU users with AI chatbots, and AI-generated content distributed to EU audiences. UK providers of high-risk AI systems or general-purpose AI models must also appoint an authorised representative in the EU.

Do I need to disclose AI-generated content in UK marketing?

Not under current UK law. There is no blanket requirement to label AI-generated marketing content in the UK. However, the EU AI Act Article 50 will require labelling from August 2026 for EU-facing content. The ASA advises disclosure where audiences could be misled without it.

Is AI lead scoring legal under UK GDPR after the DUAA changes?

AI-powered lead scoring using non-special-category data is now permitted by default under UK GDPR as amended by the DUAA, provided organisations inform individuals, allow them to challenge decisions, and offer human intervention. Lead scoring using special-category data such as health or ethnicity remains subject to stricter controls. A DPIA is almost always required.

What should an internal AI policy for a marketing team include?

A practical marketing team AI policy should cover approved tools and prohibited uses, data handling rules preventing personal data in unapproved tools, mandatory human review of published outputs, procurement governance with DPIA requirements, AI literacy training, governance structure with a responsible person, and a quarterly review cycle. The ICO published its own AI policy in August 2025 as a model template.

How much can the ICO fine for AI-related violations?

The ICO can impose fines of up to £17.5 million or 4% of global annual turnover, whichever is higher, under UK GDPR. The DUAA raised PECR fines to the same level, up from £500,000. ICO enforcement escalated sharply in 2025, with fines reaching £19.6 million from seven cases, including a record £14 million Capita settlement. A £14.47 million fine against Reddit followed in February 2026.

What percentage of UK businesses have AI governance?

Only 7% of UK businesses have fully embedded AI governance frameworks according to the Trustmarque AI Governance Index 2025. 54% have minimal governance or none. Just 18% have continuous monitoring with KPIs. Companies building governance frameworks now gain a competitive advantage: organisations with embedded governance report faster AI deployments and stronger accountability.

What enforcement actions has the ICO taken in 2026?

The ICO fined Reddit £14.47 million on 24 February 2026 for children's privacy violations, its largest children's data fine to date. On 5 February 2026, it issued fines totalling £247,590 to Imgur and MediaLab.AI. The regulator also won a significant appeal establishing jurisdiction over Clearview AI. These actions signal sustained enforcement against AI-driven data processing throughout 2026.

Key Takeaway

UK businesses face a fragmented AI compliance landscape created by multiple regulators and overlapping laws. The gap between AI adoption (72% in marketing) and governance (7% embedded) is a critical risk. May's King's Speech and August's EU AI Act deadline are the two most important dates on the 2026 compliance calendar.

Is your marketing AI strategy compliant?

Whitehat SEO's AI consultancy team helps mid-market B2B companies audit their marketing AI stack, build compliant internal policies, and prepare for the August 2026 EU AI Act deadline. As a HubSpot Diamond Partner, we ensure your CRM and marketing automation remain fully compliant with the newly-amended UK GDPR.

Clwyd Probert

SEO and AI Compliance Consultant, Whitehat SEO

Clwyd leads Whitehat SEO's AI governance and compliance practice. With expertise in UK GDPR, the EU AI Act and emerging AI regulation, Clwyd advises mid-market B2B companies on navigating complex compliance landscapes while maintaining marketing velocity. As a HubSpot Diamond Partner, Clwyd specialises in AI-powered CRM and marketing automation compliance.

Sources: AI Opportunities Action Plan (DSIT, January 2025); Data (Use and Access) Act 2025 (UK Parliament, Royal Assent June 2025); ICO AI and Biometrics Strategy (June 2025); EU AI Act (Regulation 2024/1689) (European Commission); AI Adoption Research (DSIT, January 2026); AI Governance Index 2025 (Trustmarque); AI and Data Protection Guidance (ICO)