
AI Audit Guide: How to Assess Your Organisation's AI Readiness and Systems

The deployment of artificial intelligence across UK organisations has accelerated dramatically, but governance has not kept pace. Today, 78% of UK financial services firms conduct algorithmic bias audits quarterly, driven by FCA guidance and reputational risk. Regulatory frameworks are tightening. Yet many organisations—particularly mid-market and SME leaders—remain unclear on what an AI audit actually covers, when it's legally required, and how to assess whether their current systems meet emerging standards. This guide provides the clarity needed to plan and execute an AI audit that protects your organisation from regulatory exposure, reputational damage, and operational risk.

| Metric | Figure | Context |
|---|---|---|
| Bias Audit Adoption | 78% | UK financial services firms |
| Audit Cost Range | £15K–£85K | Comprehensive system audits |
| ISO 42001 Certified | 300+ | UK organisations by 2024 |
| Big 4 Wait Time | 8–12 weeks | Average consultant availability |

Sources: FCA Guidance on Algorithmic Governance 2024, ISO/IEC 42001 AI Management System 2024, Gartner AI Audit Survey 2024

Key Takeaway

An AI audit is no longer optional for organisations deploying decision-making or content-generation systems. It's a mandatory governance practice for regulated sectors (finance, insurance, healthcare) and a strategic priority for all others. The sooner you audit, the sooner you identify and mitigate risks—and unlock competitive advantage.

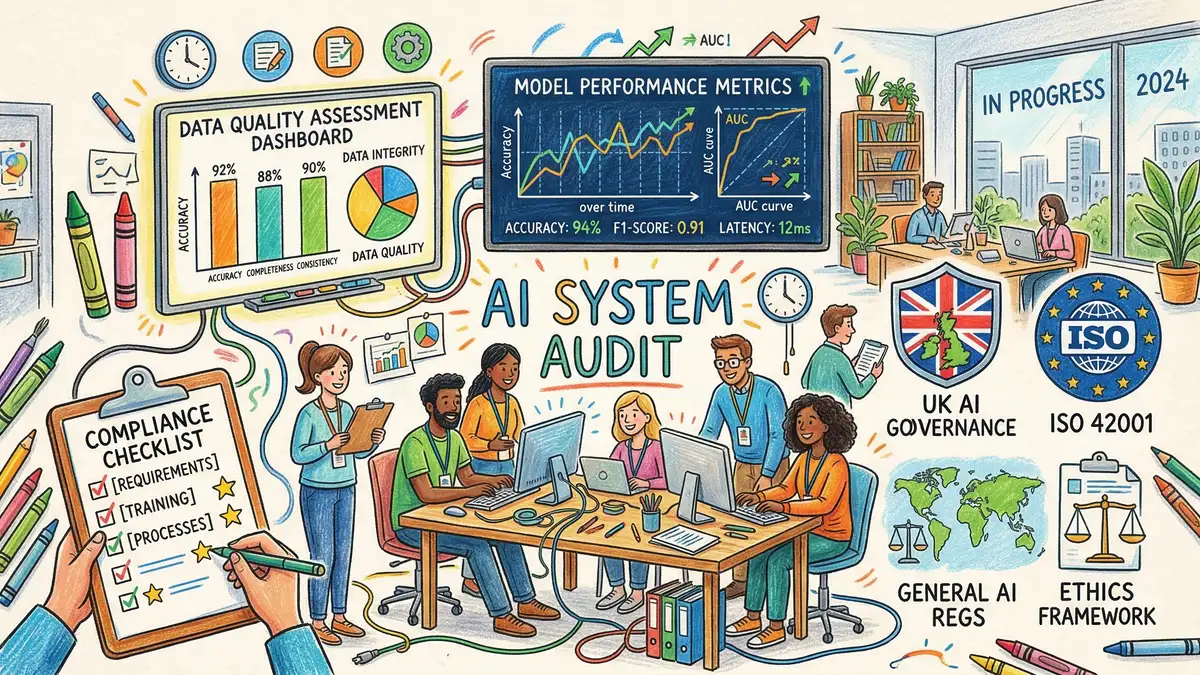

What Is an AI Audit? Definition and Core Scope

An AI audit is a comprehensive, independent assessment of how your organisation acquires, deploys, and monitors artificial intelligence systems. It examines technical performance, governance structures, compliance with regulations, and management of algorithmic bias and data risks.

Unlike traditional IT audits, which focus on infrastructure and security, an AI audit addresses decision-making processes. It asks: Is this AI system making fair, accurate, and compliant decisions? Are we monitoring model performance in production? What happens when the model fails? Do we have a rollback plan? Can we explain a decision to a regulator?

Three types of AI audits exist:

Pre-Adoption AI Readiness Assessment

Evaluates whether your organisation has the data infrastructure, governance framework, and organisational readiness to deploy AI safely. Conducted before purchase or implementation. Cost: £3,000–£8,000. Timeline: 2–4 weeks.

Deployed System Audit

Examines AI systems already in production, testing for accuracy, fairness, explainability, and regulatory compliance. This is the most common audit type. Cost: £15,000–£85,000 depending on system complexity. Timeline: 6–12 weeks.

AI Supply Chain Audit

Assesses third-party AI vendors, model providers, and data suppliers to ensure they meet your governance and risk standards. Often a subset of a wider procurement audit. Cost: £5,000–£20,000. Timeline: 4–8 weeks.

Why AI Audits Are Now Mandatory (Not Optional)

Regulatory expectations have shifted. The UK has not passed a single AI statute; instead, sector regulators apply existing rules to AI systems, which in practice makes audit evidence a requirement for high-risk systems in regulated sectors. The Information Commissioner's Office (ICO) expects organisations that process personal data through AI systems to document their impact assessments and controls.

Financial services: The FCA expects firms to conduct algorithmic bias audits on credit decisions, pricing models, and customer assessment tools quarterly. Failure to do so can result in enforcement action and customer compensation.

Healthcare: NHS trusts deploying AI diagnostic tools must audit model performance against equitable outcomes, ensuring no demographic group (age, gender, ethnicity) experiences materially worse accuracy.

Insurance: The FCA has warned that automated underwriting systems must be auditable and explainable, with documented evidence of fairness checks before deployment.

Beyond legal requirements, AI audits protect your organisation from reputational and operational risks. A high-profile algorithmic bias incident—customer complaints, media coverage, regulatory investigation—can cost six figures in remediation, customer recompense, and lost trust.

The Complete AI Audit Framework: What Gets Assessed

A professional AI audit covers eight distinct domains. Your audit scope will depend on which AI systems you operate and which standards apply to your industry.

| Audit Domain | What Is Assessed |

|---|---|

| Accuracy & Performance | Does the model perform as expected across all data types? Are accuracy metrics tracked in production? What is the error rate, false positive rate, and false negative rate? Is performance monitored across different customer segments? |

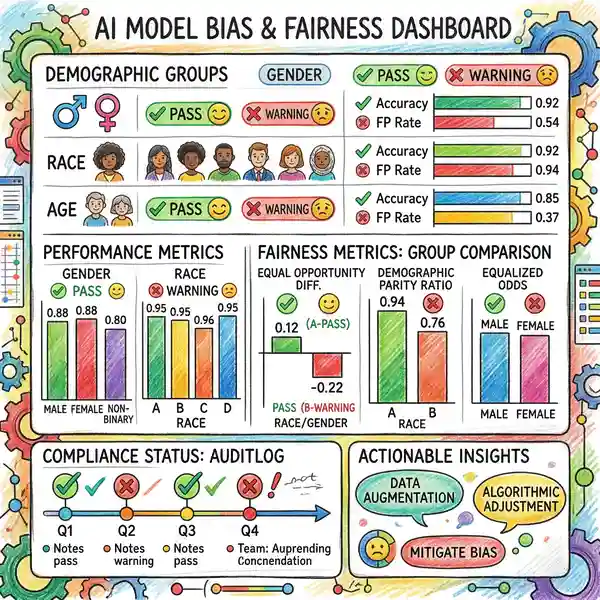

| Bias & Fairness | Does the model make systematically worse predictions for any demographic group (age, gender, ethnicity, disability)? Has bias testing been conducted? Are outcomes logged and analysed by protected characteristics? |

| Explainability | Can you explain why the model made a specific decision? Is there feature importance analysis? Can a regulator or customer understand the decision-making rationale? |

| Data Governance | Is the training data documented, version-controlled, and free from labelling errors? Has the data been tested for completeness, consistency, and timeliness? Are data access controls in place? |

| Regulatory Compliance | Does the system comply with GDPR data protection, FCA rules on algorithmic decision-making, ICO guidance on impact assessments, and industry-specific standards? |

| Model & Process Governance | Is there documented model validation? Do you have a change control process for model updates? Is there a human review layer for high-stakes decisions? Is there a rollback procedure if the model fails? |

| Vendor & Supply Chain Risk | If using third-party models or providers, have their controls been assessed? Do they have SLAs? What is their audit trail? What happens if they go out of business or discontinue support? |

| Incident Response & Monitoring | Is there a process to detect model degradation in production? What alerts and escalation procedures exist? Is there a documented incident response plan if the model makes materially wrong decisions affecting customers? |

Sources: ICO AI Governance Framework 2024, FCA Algorithmic Governance Expectations 2024, NIST AI Risk Management Framework 2024
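The questions in the Accuracy & Performance and Bias & Fairness rows of the table above can be made concrete with a few lines of code. The following is a minimal sketch, assuming a binary decision model and a production log of `(group, actual, predicted)` tuples; the function name `group_error_rates` and the sample data are hypothetical and not taken from any standard or auditor's toolkit.

```python
from collections import defaultdict


def group_error_rates(records):
    """Return accuracy, false positive rate and false negative rate per group.

    `records` is an iterable of (group, actual, predicted) tuples with
    binary labels, where 1 is the positive class.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, actual, predicted in records:
        if actual == 1:
            key = "tp" if predicted == 1 else "fn"
        else:
            key = "fp" if predicted == 1 else "tn"
        counts[group][key] += 1

    rates = {}
    for group, c in counts.items():
        total = sum(c.values())
        negatives = c["fp"] + c["tn"]
        positives = c["tp"] + c["fn"]
        rates[group] = {
            "accuracy": (c["tp"] + c["tn"]) / total,
            # A rate is None when the group has no cases of that class.
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
        }
    return rates


# Hypothetical production log: (age band, actual outcome, model decision).
sample = [
    ("18-30", 1, 1), ("18-30", 0, 1), ("18-30", 0, 0), ("18-30", 1, 0),
    ("31-50", 1, 1), ("31-50", 0, 0), ("31-50", 0, 0), ("31-50", 1, 1),
]

rates = group_error_rates(sample)
for group in sorted(rates):
    print(group, rates[group])
```

A large gap between groups on any of the three rates is a prompt for deeper investigation, not a finding in itself; sample sizes per group need checking before drawing conclusions.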

The Bottom Line

Most organisations deploying AI into decision-making (credit, claims, hiring, pricing, customer service) should conduct a full deployed system audit. Even organisations in lightly regulated sectors should run a readiness assessment before acquiring new AI tools. The earlier you audit, the cheaper the remediation—and the clearer your competitive position.

Need an independent assessment? Our AI audit specialists deliver comprehensive, vendor-neutral evaluations of your systems against ISO 42001, FCA, and ICO standards.


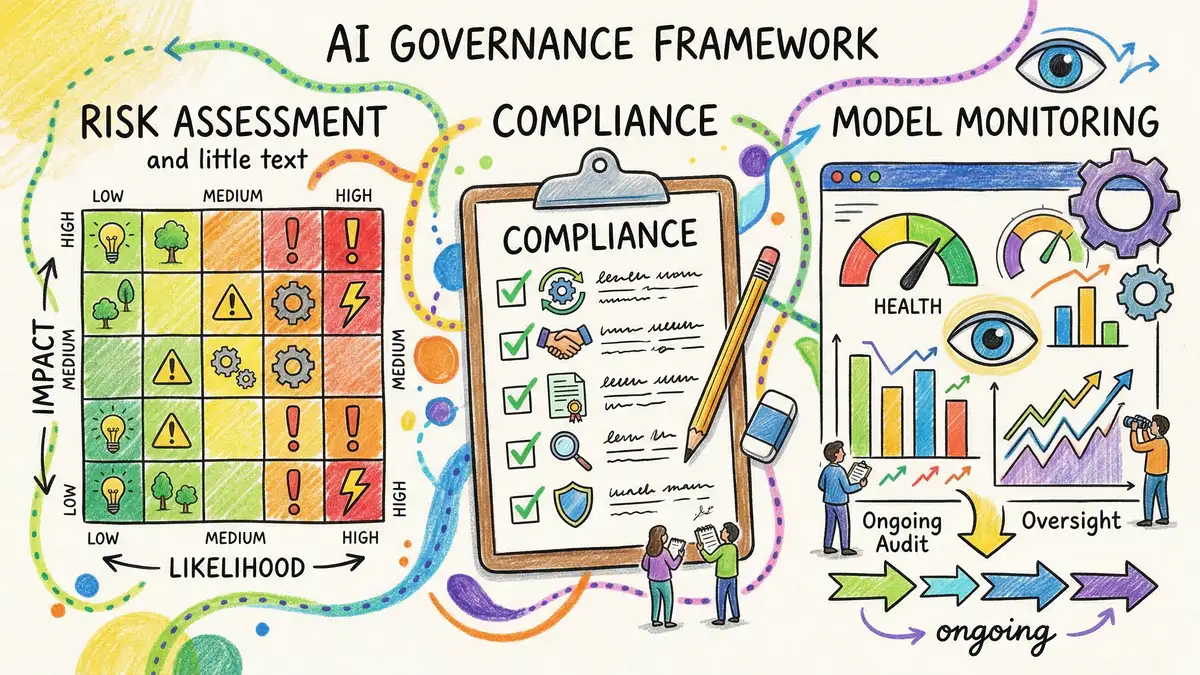

How to Conduct an AI Audit: Step-by-Step Process

Most professional AI audits follow a structured, phased approach:

Scoping & Planning (Week 1–2)

Define which AI systems will be audited. Agree on success criteria. Identify regulatory requirements (FCA, ICO, GDPR, ISO 42001). Secure stakeholder buy-in. Plan data access and interview schedules. Allocate internal project ownership.

Information Gathering (Week 2–4)

Collect system documentation, model cards, training data specifications, and validation reports. Interview data scientists, product owners, and compliance teams. Request model logs and performance metrics from production. Identify gaps in documentation.

Technical Analysis (Week 3–8)

Run bias testing across demographic groups. Validate model accuracy and performance metrics. Trace feature importance and decision paths. Test explainability. Audit data quality and labelling. Review version control and change logs. Stress-test edge cases.
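One common first step in bias testing across demographic groups is to compare the share of favourable decisions each group receives. The sketch below is illustrative only: `selection_rates`, `disparate_impact_ratio`, and the sample decisions are hypothetical names and data, and the 0.8 review threshold is an assumption borrowed from the "four-fifths" rule of thumb, not a UK legal or regulatory test.

```python
def selection_rates(decisions):
    """Share of favourable decisions (1) per group.

    `decisions` is an iterable of (group, decision) pairs.
    """
    totals, favourable = {}, {}
    for group, decision in decisions:
        totals[group] = totals.get(group, 0) + 1
        favourable[group] = favourable.get(group, 0) + (1 if decision == 1 else 0)
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest.

    Returns None when no group received a favourable decision.
    """
    highest = max(rates.values())
    if highest == 0:
        return None
    return min(rates.values()) / highest


# Assumption: a heuristic trigger for closer review, not a legal standard.
REVIEW_THRESHOLD = 0.8

# Hypothetical decisions: group A approved 8 of 10, group B approved 5 of 10.
decisions = (
    [("A", 1)] * 8 + [("A", 0)] * 2 +
    [("B", 1)] * 5 + [("B", 0)] * 5
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio is not None and ratio < REVIEW_THRESHOLD
print(rates, ratio, needs_review)
```

Selection-rate comparison ignores whether the decisions were correct, so auditors typically pair it with per-group error rates before concluding that a model is biased.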

Compliance Assessment (Week 6–10)

Map findings against FCA, ICO, GDPR, ISO 42001, and any industry-specific standards. Identify compliance gaps. Assess risk exposure (regulatory, reputational, operational). Determine if remediation is needed before continued use.

Reporting & Remediation Planning (Week 10–12)

Document findings with evidence. Rate findings by severity (critical, high, medium, low). Provide remediation recommendations with timelines and owner assignments. Present executive summary to leadership. Agree on ongoing monitoring approach.
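The severity rating and owner assignment described in the reporting phase map naturally onto a small findings register. This is a minimal sketch under stated assumptions: the `Finding` fields, the `prioritise` and `summarise` helpers, and the example findings are all hypothetical, and a real audit report would carry evidence references as well.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Lower value sorts first, so critical findings lead the report.
    CRITICAL = 1
    HIGH = 2
    MEDIUM = 3
    LOW = 4


@dataclass(frozen=True)
class Finding:
    title: str
    severity: Severity
    owner: str
    due_in_days: int  # remediation deadline, counted from the report date


def prioritise(findings):
    """Order findings by severity, then by the nearest deadline."""
    return sorted(findings, key=lambda f: (f.severity, f.due_in_days))


def summarise(findings):
    """Count findings per severity level for the executive summary."""
    summary = {level.name.lower(): 0 for level in Severity}
    for finding in findings:
        summary[finding.severity.name.lower()] += 1
    return summary


# Hypothetical findings, for illustration only.
findings = [
    Finding("No rollback procedure documented", Severity.HIGH, "Head of Engineering", 60),
    Finding("Model card missing training data version", Severity.MEDIUM, "Data Science Lead", 90),
    Finding("Approval rates differ materially by age band", Severity.CRITICAL, "Chief Risk Officer", 30),
]

for finding in prioritise(findings):
    print(f"{finding.severity.name:<8} {finding.due_in_days:>3}d  {finding.owner}: {finding.title}")
print(summarise(findings))
```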

AI Audit Costs: Budget Planning for UK Organisations

AI audit costs vary significantly based on system complexity, number of systems, and which standards you're auditing against.

Quick Readiness Assessment: £3,000–£8,000. Scope: Pre-deployment evaluation or light review of 1–2 systems. Timeline: 2–4 weeks. Best for: SMEs or organisations early in AI adoption.

Single-System Audit: £15,000–£35,000. Scope: Full technical, compliance, and governance review of one production AI system. Timeline: 6–8 weeks. Best for: Organisations with one primary AI system (e.g., credit risk model, fraud detection).

Multi-System Audit: £45,000–£85,000+. Scope: Comprehensive audit of 3–5 interconnected systems. Timeline: 10–12 weeks. Best for: Enterprise organisations with complex AI infrastructure.

Cost drivers that increase scope:

- System complexity (custom models cost more than commercial off-the-shelf).

- Regulatory intensity (FCA-regulated systems cost more than non-regulated).

- Data volume and variety (systems processing millions of records require longer bias testing).

- Vendor involvement (if third-party models are involved, you'll need supply chain audit too).

- Ongoing support (most auditors recommend quarterly monitoring after initial audit, at £2,000–£5,000 per quarter).
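For budget planning, the tier prices and the quarterly monitoring fee quoted above can be combined into a simple range estimate. The sketch below uses only the figures from this section; the function name `estimate_budget` and the tier keys are hypothetical, and the multi-system upper bound is treated as £85,000 even though the article lists it as open-ended.

```python
# Audit price ranges in GBP, taken from the tiers listed in this section.
AUDIT_TIERS_GBP = {
    "readiness": (3_000, 8_000),
    "single_system": (15_000, 35_000),
    "multi_system": (45_000, 85_000),  # listed as £85,000+, so a floor on the top end
}

# Quarterly monitoring fee range in GBP, from the "Ongoing support" cost driver.
MONITORING_PER_QUARTER_GBP = (2_000, 5_000)


def estimate_budget(tier, monitoring_quarters=0):
    """Return a (low, high) budget range for an audit plus follow-on monitoring."""
    if monitoring_quarters < 0:
        raise ValueError("monitoring_quarters must be zero or positive")
    audit_low, audit_high = AUDIT_TIERS_GBP[tier]
    monitor_low, monitor_high = MONITORING_PER_QUARTER_GBP
    return (
        audit_low + monitor_low * monitoring_quarters,
        audit_high + monitor_high * monitoring_quarters,
    )


# Example: a single-system audit followed by three quarters of monitoring.
low, high = estimate_budget("single_system", monitoring_quarters=3)
print(f"£{low:,} to £{high:,}")
```

The estimate excludes remediation work and any supply chain audit, both of which the cost drivers above flag as separate items.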

Common Mistake: Underinvestment in Audit

Many organisations attempt "quick" audits with junior consultants or internal staff to save costs. This often produces superficial findings that miss material risks such as bias, compliance exposure, and operational weaknesses.

The reality: A thorough single-system audit by qualified, independent auditors costs £15,000–£35,000, which is small next to the potential cost of remediation, customer compensation, and regulatory fines. It's a strategic investment, not an operating expense.

Finding & Selecting an AI Auditor

The AI auditor you choose will shape the quality and credibility of your findings. Look for these key attributes:

Independence

The auditor should have no financial interest in your AI strategy or vendor choice. Avoid consultants who also sell AI tools or services.

Technical Depth

The auditor should have data scientists and engineers on staff who can perform bias testing, model validation, and code review—not just process consultants.

Regulatory Credibility

If you're in a regulated sector (finance, insurance, healthcare), choose an auditor with demonstrable experience of that sector's regulatory expectations (for example FCA and ICO guidance) and, where certification is the goal, qualified ISO 42001 assessors.

Questions to Ask Your Potential Auditor:

• How many AI audits have you conducted in my industry? Can you provide references?

• What bias testing and explainability methodologies do you use, and which tools (e.g., Fairlearn or AI Fairness 360 for fairness; SHAP, LIME, or AI Explainability 360 for explainability)?

• Will the audit findings be credible to my regulator? How are findings documented?

• Do you offer ongoing monitoring or just one-time assessment?

• What is your approach if critical risks are found? Can you support remediation?

Regulatory Frameworks & Compliance Standards for AI Audits

Several overlapping frameworks govern AI audit requirements in the UK:

ISO/IEC 42001: The new international standard for AI management systems. Covers governance, risk assessment, documented controls, and continuous improvement. Many organisations pursue certification. Audit timeline: 12–16 weeks. Cost: £20,000–£40,000.

FCA Algorithmic Governance: For financial services firms. Requires documented decision-making logic, bias testing, and quarterly reporting on algorithmic decisions. Audits become formal evidence of compliance.

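ICO Data Protection Impact Assessment (DPIA): Required where processing of personal data is likely to result in a high risk to individuals, which in practice covers many AI systems. The assessment should cover accuracy, fairness, and potential harms. AI audits often form the evidence base for DPIA findings.

GDPR Article 35: The legal basis for the DPIA requirement. It names systematic and extensive automated decision-making with legal or similarly significant effects as a case that requires an assessment. AI audits provide the technical foundation for these assessments.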

NIST AI Risk Management Framework: A flexible, voluntary framework published by the US National Institute of Standards and Technology. It is not UK-specific but is commonly used as a reference point. It is organised around four functions: govern, map, measure, and manage.

FAQ: AI Audits and Your Organisation

Do small businesses need AI audits?

Yes, if you're deploying AI into customer-facing decisions (pricing, approvals, recommendations). A quick readiness assessment (£3,000–£8,000) is recommended before implementation. Full audits are most critical for regulated sectors or decision-making systems affecting customer outcomes.

How often should I conduct an AI audit?

Best practice is an initial audit before or shortly after deployment, then quarterly monitoring for critical systems and annual refreshes for all production systems. This ensures ongoing compliance and catches model drift early.

Can I conduct an internal audit instead of hiring external consultants?

Internal audits are useful for documentation and process review, but lack independence and technical depth. External auditors provide credibility with regulators, unbiased risk assessment, and technical expertise. For regulated sectors, external audit is recommended as a baseline, supplemented by internal monitoring.

What if the audit finds critical risks? What happens next?

Critical findings (e.g., severe bias, compliance gaps) require immediate remediation—typically within 30–90 days. Your auditor should provide remediation guidance. You may need to pause the system, retrain the model, or add human oversight until fixed. Most audit contracts include follow-up assessments to confirm remediation.

How should I respond to a regulator asking about AI audits?

If the regulator (FCA, ICO, etc.) requests evidence of AI governance, provide your audit findings, remediation actions taken, and ongoing monitoring approach. This demonstrates proactive risk management and good governance. If you haven't yet audited, acknowledge this, explain your timeline, and confirm next steps.

Can audit findings hurt my business if disclosed?

Not usually. Audit findings are normally confidential between you and your auditor under the terms of the engagement, but they are not automatically covered by legal privilege; if privilege matters to you, take legal advice on how the audit is commissioned. Regulators may request findings during investigations, but routine audit findings are not published. Conducting audits proactively demonstrates governance maturity, not weakness.

Building Your AI Audit Roadmap

If you're new to AI audits, follow this three-phase approach:

Phase 1: Assessment (Month 1)

List all AI systems in use. Identify which affect critical decisions or are in regulated sectors. Conduct a quick readiness assessment (£3,000–£8,000) to baseline your governance maturity.

Phase 2: Deep Audit (Months 2–4)

Commission a full audit of your highest-risk systems (those affecting decisions, processing sensitive data, or in regulated sectors). Budget £15,000–£35,000. Assign an internal owner to manage the engagement and remediation.

Phase 3: Ongoing Governance (Months 5+)

Establish quarterly monitoring (£2,000–£5,000/quarter) to track model performance, detect drift, and ensure controls remain effective. Plan annual refreshes. Build AI governance into your risk management cadence.
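Drift detection in quarterly monitoring is often implemented by comparing the distribution of recent model scores against a baseline. One widely used measure is the Population Stability Index (PSI). The sketch below is a simplified, equal-width-bin version for illustration; the function name, the bin count, and the review thresholds are assumptions (0.1 and 0.25 are commonly quoted rules of thumb, not regulatory limits).

```python
import math


def population_stability_index(baseline, recent, bins=10):
    """PSI between a baseline sample and a recent sample of model scores.

    Bins are equal-width over the baseline range; recent values outside
    that range are counted in the first or last bin.
    """
    lowest, highest = min(baseline), max(baseline)
    width = (highest - lowest) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lowest) / width)
            counts[min(max(index, 0), bins - 1)] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    expected = proportions(baseline)
    observed = proportions(recent)
    return sum((o - e) * math.log(o / e) for e, o in zip(expected, observed))


# Assumed rule-of-thumb thresholds; agree the real ones with your auditor.
WATCH_THRESHOLD = 0.1
ACTION_THRESHOLD = 0.25

# Hypothetical scores: a uniform baseline and a recent sample shifted upwards.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [min(0.99, score + 0.3) for score in baseline_scores]

stable_psi = population_stability_index(baseline_scores, baseline_scores)
drifted_psi = population_stability_index(baseline_scores, shifted_scores)
print(stable_psi, drifted_psi, drifted_psi > ACTION_THRESHOLD)
```

PSI flags that the score distribution has moved, not why; a breach should trigger the accuracy and fairness checks from the audit rather than an automatic rollback.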

Ensure Your AI Systems Meet Regulatory & Performance Standards

Our independent AI audit team delivers vendor-neutral, technically rigorous assessments against ISO 42001, FCA, ICO, and NIST frameworks. We identify risks, provide remediation guidance, and support ongoing governance.

P3 AI Audit Team

AI Governance & Risk Experts, Whitehat Consulting

Our audit specialists have conducted 100+ independent AI system assessments across financial services, insurance, healthcare, and retail sectors. We are ISO 42001 assessed, FCA-credible, and aligned with NIST and ICO frameworks. We help organisations understand their AI risks and build sustainable governance practices.

Sources: ICO AI Governance Framework 2024, FCA Algorithmic Governance Expectations 2024, ISO/IEC 42001 AI Management System 2024, NIST AI Risk Management Framework 2024